Remarks of Dr. Drew C. Richardson

before the

National Academy of Sciences/National Research Council

Committee to Review the Scientific Evidence on the Polygraph

17 October 2001

RICHARDSON: I suppose it's only fair that I introduce myself and give you a little bit about my perspective. I have recently retired from the FBI. I was an agent for twenty-five years. I am a research physiologist, although I spent the vast majority of my career as a scientist in the FBI working as a physical scientist, and that is as a chemist and a toxicologist. You no doubt in the next hour, a bit, perhaps, will get that perspective from me. I was involved in polygraph research specifically for the FBI. My group associations -- agency associations -- largely have been with the FBI as well as DoDPI.

My formal involvement in polygraph research was in the late '80s and early '90s. I have not been involved since that time, formally. It's interesting that although I've had a day job in the last ten years since that time, I've been something of an unwitting, if not reluctant, social activist, I suppose. I've not looked for that role, but I've found myself in the position of having been contacted by several hundred people presenting either themselves or others as victims of some sort of polygraph, generally polygraph screening, and generally applicant polygraph screening.

So with that in mind, and the concerns that I have related to what may be the greater victim, and that is the United States and its national security, I obviously -- I present a perspective and a bias -- and don't refrain from it -- and will proceed along those lines. For those on the left side of the room who might be concerned -- they're interested in what it is I'm going to have to say after having been restrained from saying it during my career in the Bureau -- you can take some solace in the fact that over the last month, I've been somewhat distracted as a result of that which has occurred on September the 11th. As I indicated, my background is as a physical chemist -- a chemist and a toxicologist. I was formerly the chief of the FBI's chemical-biological response team, officially the Hazardous Materials Response Unit, and as a result, and particularly in the last several days with anthrax letters and so forth, have been very very busy with that, and so I've had to divide my time and have not spent quite as much time thinking about polygraph as I might, but nevertheless I suspect we have a few things that we can talk about today.

I don't know whether those sitting around the room received the memo that I sent to the panel members, but I discussed a number of things that I wanted to talk about. Okay. It's indicated to me that perhaps you have. As you can tell, I've suggested a number of topics for our consideration. Not that I plan on covering in any degree of detail any of these subjects -- it almost looks like a, perhaps a -- although from a different perspective -- a DoDPI course curriculum perhaps. But I paneled it in order to do this. I feel, having heard various presentations and having heard various aspects of these topics having been made in the past three or four public hearings, I guess two of which I've attended, it appears to me that it's very easy to take some of these things out of context and to miss some of the significance that may be attached to looking at them in group association. For instance, as I suggested, it makes little sense to talk about countermeasures -- and I want to talk about countermeasures and [word indistinct] -- perhaps even give a demonstration or offer to give a demonstration. But I think it makes very little sense to talk about polygraph countermeasures until one has looked at underlying validity issues for polygraph screening, because polygraph screening -- whatever strengths and weaknesses it may have, are those in addition to what Control Question Test polygraphs -- criminal specific issues -- might have. It makes little sense to talk about it without that. And I think perhaps the one thing that may have been discussed in my absence -- your private deliberations -- meaning I didn't attend, but I've not heard discussed -- is really the fundamental theory of polygraphy: how it works. I think that if we actually look at this we might learn a few things from an alternative theory perhaps. So, with that in mind, perhaps we'll begin there.

I would like to talk about the theory of Control Question Test polygraphy. I suppose also it's very important to define what we're going to be talking about today. Everything that we're going to be talking about -- validity and countermeasures and so forth -- is really dependent upon the specifics of polygraph application and polygraph format. That which is used widely in the federal government, and what I'm going to be talking about, will be control question polygraphy. It's widely used in both specific tests -- specific issue testing -- as well as in polygraph screening. [word indistinct] the presenters from the operational polygraph programs have talked about Control Question Test polygraphy. So I would like to talk about that.

What I'd really like to talk about is, and I'd like to begin at the beginning, with whether or not there is an underlying fundamental premise for which we can practice. Is there a theoretical construct for this? And do we really need one? One of the things I found interesting with your meeting in Massachusetts, having listened to portions of the audio tapes and several of the speakers, is one of the speakers, a well-known advocate and uh -- academic advocate -- for the polygraph, Charles Honts, indicated in his presentation that he had no idea why it worked. Which I find to be kind of interesting. Of course, Charles is quite familiar -- Charles didn't mean to indicate that he had not heard numerous theories that are presented at DoDPI -- in fact, probably he taught those theories himself -- but that he was not particularly convinced by any of them. He saw no particular reason to believe in them. But what Charles would probably suggest is that not having any firm understanding or theoretical construct is not a roadblock to application [and] practice. Is that true? Well, of course, it is true. We know that until 1953, when the seminal one-page paper by Crick and Watson in the journal Nature that described the double-helix nature of DNA, we had no idea, we had no basis for, molecular biology or the study of molecular biology. Of course, that in no way prevented the daily cell replication and protein expression that's gone on within the species for day eternal. That being the case, clearly one can have practice without understanding. But the absence of understanding certainly does not justify practice or suggest it. In fact, the absence of theory may indicate flaws in practice. The absence of theory may be as a result of not looking sufficiently for alternatives.

So I would like to talk about the theory which is commonly used, and I think it's been mentioned a time or two -- it's been talked -- about polygraph practice, and that is fear of detection. Before I can talk about that, I need to specify a little bit how a polygraph exam works. In Control Question Test polygraph, and for ease of conversation, I'm going to refer to a probable-lie Control Question Test -- one can use a directed lie. A directed lie is used in fact in various screening applications, but for my purposes it probably doesn't make a great deal of difference. But for conversation's purpose, I want to specify what it is I'm going to do, and that is the Control Question Test, the probable-lie Control Question Test.

Well, what we've done, and again I will suggest that this has not been particularly clarified, perhaps even misrepresented, what is really going on. One asks two different kinds of questions in a probable-lie Control Question Test that one is actually scoring. There are several different types of questions in what may be a ten-question sequence of a polygraph chart, a polygraph exam. But the two which are [unclear] and which are scored are relevant questions and control questions. A relevant question or --a bank robbery, a bank robbery is the issue we're going to look at -- would be, "Did you rob the bank? Do you know who robbed the bank? Do you know where the money is?" and particularly investigative -- serious kinds of questions, largely an interrogatory form of the elements of the crime, which I'll bring up again. I think that polygraph examiners, by and large -- some of the best are examples of people who are very skilled interviewers, investigators, interrogators, and so forth -- that in polygraph, examiners at large, in the [unclear], are paramount. They sit in a polygraph suite largely not doing any investigation, and yet they ask these kinds of questions for these kinds of matters. Paired with relevant questions again are control questions. The control question for that would be -- a paired control question might be, "Prior to the age of eighteen did you ever steal something from someone? Did you ever steal something from someone who trusted you?" or, "Prior to 1995, did you ever steal something?" You have the ability with Control Question Tests to time-bar certain things and add certain distinctions in time. In fact, that's a positive thing.

Now, the theory, again, that polygraph people generally will talk about in terms of a mechanism for how this works is fear of detection. And the notion is that a guilty person will be more concerned with relevant questions and properly-set control questions will be of more concern to innocent people and that they will be concerned about possible deception, or being concerned that they may be telling a lie to these various things. Now, I think the competing, and perhaps a better, explanation of what's going on is fear of consequences. What I mean by this is, if I were to ask you, "Did you rob the bank on the corner of First and Main? (in fact, it hasn't been robbed, but if I asked you, you haven't robbed it?)." Well, when you answer that question and you answer the paired control question, what you're going to be concerned about is not the fact that you're going to be telling the truth to that relevant issue, but you are going to be concerned about that relevant question, because you're going to be concerned about the consequences. And the consequences are, if in fact you're found to be deceptive on that polygraph exam, that you're going to be subject to further interrogation, investigation, perhaps prosecution, perhaps conviction, and perhaps loss of freedom, family, resources, and so forth. I think it probably makes much more sense that this would be the case, and it becomes even more so as we look at other examples.

One perhaps can set a control question to compensate for the bank robbery example that I gave you. But there are other things that become much more difficult to do. For instance, if we're talking about child sexual abuse, if I were to describe the very graphic act and to ask you, "Did you do it?" again, the consequences of that are magnified not only with the considerations that I gave you about bank robbery, but the revulsion and the social stigma that goes with something like that.

Another example, and one that has come up at various times here -- perhaps others might care to comment about it -- and I'm happy to be interrupted or stopped or questioned -- [unclear] is that of the Wen Ho Lee case. There are a couple things about that case -- and I had nothing to do with this investigation [unclear] its investigation or polygraph exam or anything else -- should begin with that. Well, one of the things which has been suggested in the lay press is that somewhere in the investigation of that, of the interview of the subject in that case, it was suggested to him -- it's like [word unclear] or his participation in certain actions or his having engaged in certain criminal actions may lead ultimately to his end being similar to that of Julius and Ethel Rosenberg. As I recall, they were electrocuted. Again, I don't know that to be the case, and I also don't know the sequence of events, but if in fact that occurred before a polygraph exam, there is no way in God's green Earth that man was not going to be concerned with the relevant questions on a polygraph exam. There are other issues that one could talk about Wen Ho Lee as well.

Probably the worst case example that I have ever heard is a case that occurred in the state of Virginia. This occurred a number of years ago and involved a man on death row. He was given a polygraph exam, as I recall, on the day he was to die, perhaps twenty-four hours before. And this was as a favor to him, and perhaps the motivation was good, but this was done by the then governor of Virginia, thinking this was one final opportunity for this man to clear himself. Well, the notion that an innocent person hours away from death is going to be more concerned about control questions, and lying on control questions, than the consequences of the relevant question, which is his impending death, is utter nonsense.

And so we're left with that. That being the case, I think that there are several implications for this sort of thing, assuming that this is true, or assuming that this is reasonable, but for sake of conversation, the fear of consequences is what is going on, I think that there are several problems to this. One thing that I don't think I've heard talked about here is the differential accuracy for guilty and innocent people, if in fact, what you've got is people being concerned about the consequences of relevant questions, this would tend to make the test more accurate for guilty people and less accurate for innocent people. There are various studies that [word unclear] this to be the case. Another aspect of this that I think -- I don't believe my colleagues in the world of operational polygraphy to be universally disingenuous. I do believe them to believe that that which they do for a living makes some sense, has some benefit, and so forth. But I believe that there's actually an explanation for what's going on, and they may actually be blind to some of the error that actually occurs.

Going back to the mechanism for how a polygraph exam is done in the federal government -- actually it's how it's done in the Bureau, and other places as well -- a polygraph examiner does not develop his own cases. He does not get his own polygraph examinees. Those people are brought to him by case agents, who conduct investigations and so forth, and bring that person to him. Well, in the case of the Bureau, and other people, I suspect, what happens is that a predominance of these cases actually are from people that the investigating case agent believes to be guilty. And I like to think that our investigative agents are good, and that in fact, that a high proportion of the people that actually end up in the polygraph suite are guilty. Well, if in fact that is the case, if in fact this fear of consequences mechanism that I'm suggesting to you is in fact the case, and, well, those two things would tend to make those exams outweigh the erroneous results that one would attain from innocent exams. You would have a higher proportion of guilty people, and the error that would occur with innocent people [unclear] quite well be overlooked for a variety of things.
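The point about a mostly guilty examinee pool masking errors on innocent people can be made concrete with a small calculation. This is only a sketch: the proportion of guilty examinees and the two accuracy figures below are illustrative assumptions, not numbers given in these remarks, and the function name is hypothetical.

```python
def apparent_accuracy(prop_guilty, acc_guilty, acc_innocent):
    """Overall fraction of correct calls in a mixed pool of examinees.

    prop_guilty  -- assumed fraction of examinees who are actually guilty
    acc_guilty   -- assumed fraction of guilty examinees correctly called deceptive
    acc_innocent -- assumed fraction of innocent examinees correctly called truthful
    """
    return prop_guilty * acc_guilty + (1 - prop_guilty) * acc_innocent


# Illustrative assumption: 90% of referred examinees are guilty, the test is
# right 90% of the time on them, and no better than a coin flip on the innocent.
overall = apparent_accuracy(prop_guilty=0.9, acc_guilty=0.9, acc_innocent=0.5)
print(f"apparent overall accuracy: {overall:.2f}")
```

Under these assumed figures the examiner would see a high overall hit rate even though half of the innocent examinees are misclassified, which is the kind of blind spot suggested above.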

Okay, another consideration with regard to this particular mechanism is there are experts with regard to research -- I'm not going to talk a great deal about field research, but that which has been suggested by Bill Iacono, Chris Patrick, and others, is that field-based research which is based on confessions tends to underestimate false positive results. And there are obvious reasons for this. I'm not going to go into that, but what I would like to talk about, because a number of the studies that are done, or even studies that are suggested now as the basis for evaluating polygraph screening, had to do with simulated crimes, laboratory... Thank you. But I'm going to suggest that anything that does not have sufficient consequences would 1) lack external validity, and 2) would tend to underestimate false positives.

What's a typical exam done? A typical exam has as examinees, in the academic world, undergraduate students, in the world of DoDPI, typically military recruits of one sort or another. These people participated in "crimes" clearly with little consequence. In the academic world, the typical crime would be to have somebody steal ten dollars from a desk, have it minimally investigated, and then polygraph. One of the things that has been suggested is a benefit to this to give some sort of motivation to this whole thing is to give a certain amount of money, and typically it's, you know, fifty or a hundred dollars for passing the polygraph exam. So what do you have here when you've done that? When you give a hundred dollars to pass a polygraph exam, you've had somebody commit a crime that amounted to ten dollars, which when investigated -- I've seen in mock scenarios FBI agents conduct interviews for ten dollar thefts and so forth, and of course you have people laughing about why in the world would an FBI agent be involved in such a thing. But beyond that, you have the ridiculous circumstance where you have people committing a crime not for the spoils of the crime, but for the benefits of the polygraph exam. Two things wrong with that. When people take a polygraph exam, what they're trying to do is avoid consequences, avoid problems. They are not in a lottery in order to win benefits. It's not a positive reinforcement, it's a negative reinforcement. And it's not five or ten times the initial crime. People commit crimes, again, for the spoils of the crime. So you have this rather bizarre sort of psychology going on in this whole thing, and this is very typical for these kinds of exams. And again what you tend to have, again, where you have reasonably high accuracies on certain academic studies -- the few peer-reviewed studies that are done -- it may well be, there may be some differential accuracy, but you may be having accuracies in the range of 85-90%. 
Well, it may well be that you have fairly high accuracies, appropriate and correct, correctly reveal the accuracies for guilty people, but the kinds of accuracies that Iacono and others suggest based on field studies -- they're approaching random chance in the field sort of chance, may well be the case for this, and they may not be revealed through the kind of work we're doing.

So, again, up until this point, everything I've said is basic Control Question Test theory when applied to a specific test. But I'd like to talk now about -- and again I really haven't heard this talked about -- is the issue of scientific control. What we're really concerned about on any given polygraph exam is not the accuracy rates determined for populations based on research. What we're interested in is what is the correct result on a given day and the probability of that being the right result. I tend to think that the numbers that we get from scoring algorithms right now are altogether ridiculous, based on false assumptions. Yes?

UNKNOWN: Just say a word more about the logic that you just mentioned a minute ago. You mentioned the unusual nature of the reward structure of some of the studies -- ten dollar thefts, hundred dollar positive rewards. Is the implication that such a reward structure would increase or decrease the validity of the test.

RICHARDSON: It would simply confuse it. What I'm suggesting is that the ten dollar crime itself would not present any sufficient motivation to commit the crime, and the hundred dollar reward would not present any serious consequences to being found guilty of a polygraph exam [unclear]. In the simulated crime the thing [unclear] I have a problem with it regardless of what you do. With a simulated crime, as with the polygraph exam, and an interview, and so forth, it doesn't go into further investigation, prosecution, and so forth, so you have additional problems. What I am suggesting -- that it's clearly a ridiculous exercise. It has absolutely nothing to do with what happens in real life. But what I'm particularly concerned about is the lack of consequences.

Scientific Control and Polygraphy

Okay, with regard to scientific control, my feeling about this is -- again the reason I've talked about fundamental theory is I think it's particularly weak and may be wrong and may be altogether misplaced. But my feeling is that there is a need for within subject, inter-exam controls, and that need becomes greater as the fundamental theory becomes weaker. It's inversely related.

So let's talk a little bit about scientific control and internal standards, particularly [unclear] and then talk about them in terms of the Control Question Test. Although the [unclear] will no doubt not be needed in this room, I've spent most of my life as a chemist, as a toxicologist, and I'd like to talk about an example out of forensic toxicology. And it has to do with the identification of cocaine, or particularly, its urinary metabolite, benzoylecgonine, found in urine, which is a very fundamental, very common, and every day sort of exam. The way that is done is that a certain quantity of benzoylecgonine, which happens to be -- this structure is meaningless -- except for the fact that it is only different from the analyte that you are looking for by five protons. This happens to be D5 instead of H5 here, so it weighs five atomic mass units greater than that which you are looking for.

Okay, so how does that work? Well, when one does a toxicological exam, one takes a raw specimen, a biological specimen, a urine specimen, does various extractions -- back extractions -- into aqueous organic solutions, concentrates, and there's a variety of things. And that gives us some sort of a separation technique, a chromatographic technique -- generally gas chromatography -- and then some sort of an identification and quantification technique, perhaps mass spectrometry.

So what happens? When one does that, deuteroylecgonine [?], the deuterated benzoylecgonine, is exactly like benzoylecgonine in every respect except for mass weight. That is, it has an equal solubility in water, in organic solvents, it's extracted out of urine the same way, it has the same affinity for packing materials and columns [?], it has the same vapor pressure, it has the same physical, chemical pressures in every respect. Well, when I do an experiment and I end up with a certain concentration of benzoylecgonine, what does that allow me to say about the experiment that I have done? Well, it allows me to do two things. And most importantly, it allows me to say that first, it worked. If I don't see benzoylecgonine in that final extract, the thing didn't work. And secondly, it allows me to make some estimation about the presence or absence of the analyte I'm looking for, benzoylecgonine, and relate it quantitatively in terms of concentration so that I can make some determination.
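The two roles of the internal standard described above (showing that the run worked at all, and giving a reference against which the analyte is quantified) can be sketched in a few lines of Python. This is a minimal illustration, not a laboratory procedure: it assumes the deuterated standard and the analyte give equal detector response, uses a single known spike concentration, and the function name, parameter names, and threshold are hypothetical.

```python
def quantify_with_internal_standard(analyte_area, standard_area,
                                    standard_conc, min_standard_area=1.0):
    """Estimate analyte concentration from the ratio of peak areas.

    analyte_area      -- measured peak area for the analyte
    standard_area     -- measured peak area for the deuterated internal standard
    standard_conc     -- known concentration of standard added to the specimen
    min_standard_area -- assumed cutoff below which the standard counts as not recovered
    """
    # First role: if the spiked standard is not seen, the run itself failed,
    # so nothing can be concluded about the analyte.
    if standard_area < min_standard_area:
        raise ValueError("internal standard not recovered; run is invalid")
    # Second role: the standard behaves like the analyte through extraction and
    # chromatography, so the area ratio scales the known concentration
    # (assuming equal detector response for both compounds).
    return analyte_area / standard_area * standard_conc


# Hypothetical numbers: the analyte peak is half the size of the standard's peak,
# and the standard was added at 100 (in whatever concentration units are in use).
print(quantify_with_internal_standard(5000, 10000, 100.0))
```

The contrast drawn in the remarks is that a Control Question Test offers neither check: there is no signal confirming the procedure worked, and no known quantity linking the control response to the relevant one.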

Okay, then. With that in mind, let's come back to what we have at hand here, that is, the Control Question Test. What we have again is a relevant question and a control question. Again, you [?] an innocent person of this bank robbery, I'm [?] an innocent person of this bank robbery. The issue is the relevant question, "Did you rob the bank?" versus the control question, "Prior to the age of 18, did you steal something?" [unclear] What is the relationship between those two questions for you, an innocent person? Hell if I know. What is the relationship for me? Well, hell if I know. What's the relationship for you on the second time that test is done for you? Again, I haven't a clue.

We do the exam, we get physiological measures from the three channels -- the four recordings -- the three channels on the polygraph exam. We can make comparisons between big bumps and small bumps on the electrodermal channel, but what do we really know in terms of scientific control? We don't know anything about it. We don't know whether the experiment worked in the first place, and we have no ability to really relate relevant to control questions.

Now let's go [unclear] polygraph screening and validity issues. I hope you will indulge me, because I know you did this before, and I know Al Zelicoff did a considerably more elegant job of doing this than I will through the consideration of [unclear], and that is, sensitivity-specificity sorts of things, but I would like to talk about base rate considerations one more time. And I'd like to put it in focus for other people in terms of say, the Robert Hanssen espionage case. Before doing that, there's -- I guess I'd like to define once and for all what a screening test is. I think that's often misunderstood. I've heard it described as a test that addresses many issues -- that has multiple issues as opposed to specific-issue tests that perhaps has [unclear] effect. That's obviously not wrong. A screening test can have one issue, and then a specific-issue criminal test can have multiple. That's not the difference. The difference is that a screening test as opposed to a specific-issue test, [in] a specific issue test, you have a known crime, or a known incident, about which you want to look. The only issue is, the person before you, the polygraph examinee, how is he connected to it? Is he a witness? Is he a subject? Is he a co-conspirator? And is he deceptive about any of those particular associations?

With screening, general screening, that is, which is what we in the federal government do right now, in terms of both applicant work and [unclear] employee -- periodic and aperiodic testing. What we're doing is, we're asking people, generally large numbers of people, about one-to-multiple issues, none of which are known to occur. And that's the key to the whole thing: none of which are known to occur. The examiner has no knowledge that the given issue has been done, so he's screening. What's he doing? He's engaging in a fishing expedition. Well, when one does that, one has -- over and above everything that I've described to you so far about fundamental theory about control question tests -- about scientific control -- applies to polygraph screening. But there are additional concerns, additional issues.

One of the ones which Charles Honts and I would agree about -- Charles Honts happens to think that there's some merit to doing specific-issue testing -- I obviously think there's very little merit to doing it -- but we both agree that there's very little merit to doing polygraph screening. His rationale for his difference of opinion between the two different types of applications is that with polygraph screening, what you have is a diminution in the differences between stimulus types -- between relevant and control issues. If you're going to ask -- in the Bureau for instance, we are concerned with our applicants about drug usage -- if I'm concerned about whether or not somebody has used marijuana more than ten times, it's hard for me to say -- to differentiate -- that time period between a time period that I've asked them if they've betrayed a trust while in high school, because there may be an overlapping time period where the marijuana issue occurred. So it's harder to separate these sorts of things.

Okay, with regard to base rates, let's talk about post-Aldrich Ames. Let's talk about Aldrich Ames, and we're going to look for Aldrich Ames. Let's assume, and again I apologize for the fixed, less elegant, less fluid sensitivity-specificity sorts of [unclear] so that we can fix this [unclear]. Talk about a perceived accuracy of 90% for a polygraph exam -- which, you know, in their wildest dreams would they not expect me to suggest that it's 90% accurate -- but if one accepts that, and one is looking for someone who's committed espionage, let's say we're looking for Robert Hanssen in a population of 10,000 FBI agents, what we will have then, if we screen 10,000 people, and we have that sort of accuracy, obviously we will have a nine-out-of-ten chance of having a deceptive chart for Robert Hanssen if he happens to be in that population. We also will correctly identify 9,000 out of the 10,000 FBI agents as innocent. We obviously will have deceptive charts for 10,000 -- or rather for 1,000 out of the 10,000 innocent people. So we'll have a thousand false positive results to the one true positive result that we had for Robert Hanssen.
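The base-rate arithmetic in this example can be checked with a short Python sketch. It assumes, as the example does for the sake of argument, that the test is 90% accurate for both guilty and innocent examinees and that there is exactly one spy among 10,000 people screened; the function and variable names are hypothetical.

```python
def screening_outcomes(population, guilty, sensitivity, specificity):
    """Expected counts from screening a population with a rare target condition.

    sensitivity -- assumed probability a guilty examinee produces a deceptive chart
    specificity -- assumed probability an innocent examinee produces a truthful chart
    """
    innocent = population - guilty
    true_positives = guilty * sensitivity            # guilty, called deceptive
    false_positives = innocent * (1 - specificity)   # innocent, called deceptive
    # Chance that any one deceptive chart belongs to a guilty person.
    ppv = true_positives / (true_positives + false_positives)
    return true_positives, false_positives, ppv


tp, fp, ppv = screening_outcomes(population=10_000, guilty=1,
                                 sensitivity=0.90, specificity=0.90)
print(f"expected true positives:  {tp:.1f}")
print(f"expected false positives: {fp:.1f}")
print(f"chance a deceptive chart is the spy: {ppv:.4f}")
```

With these assumptions the expected number of false positives is roughly one thousand against less than one true positive, so a given deceptive chart has on the order of a one-in-a-thousand chance of belonging to the spy.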

Charles Honts, in one of his Forensic Reports papers dealing with a screening study he had done in the late '80s with Gordon Barland and others -- Steve Barger, I think -- at DoDPI, indicated that he thought that there was a 2% chance of catching a guilty person -- getting a spy -- with the result of what he had seen. Obviously, I would agree with Charles that it's a very very weak [unclear] you aren't going to catch anybody by doing polygraph screening. But I think it's obviously much worse than that. The example I gave you, obviously, we have .001 chance of catching a spy. So what happens? You have a needle in a haystack. So you have the one Robert Hanssen, presumably with a deceptive chart. You have a thousand false positive results with the chart, and you're trying to find him. Well, in the case of Robert Hanssen, let's look at it. So, you're going to interview these people. In the case of Robert Hanssen, he happened to be a good Catholic with a fair reputation in the neighborhood, and many kids, and demographics not unlike our former director of the FBI. I suspect that he probably wouldn't be real high on the [list of] people that you'd pursue. So I suspect what would happen is, had you actually done that, had Robert Hanssen actually been given a polygraph exam, he would have been cleared, and as a result of having taken a polygraph exam, he would be in a better condition than had he not taken one. In other words, what I'm trying to say is, I don't think polygraph screening is a neutral. It's a negative, because what's going to happen is that you'll have people getting through it who are given a free ride until the next year -- the next 5-year reinvestigation. You're going to miss those people. Yes?

UNKNOWN: Is that adjudication, or is that a problem up there?

RICHARDSON: The adjudication is given such a difficult problem that it can't possibly handle it. They're the ones who are technically going to make the formal mistake, but they're put in that position through the considerations of polygraph screening. So I think it's untenable -- the adjudicators I think are put in an untenable...

UNKNOWN: Drew, I [unclear] in fact, Bob Hanssen did everything he could to ensure that he was assigned in such a way in the Bureau that he would not have to take a polygraph because he was concerned about the polygraph.

RICHARDSON: Perhaps --

UNKNOWN: [unclear] I know it for a fact.

RICHARDSON: The point is, you and I -- I don't know whether you have or not -- but I went my whole career with the exception of the polygraph exam that I had to take in order to enter into DoDPI -- it's very easy, it has been very easy. One does not have to take overt actions in order to avoid taking a polygraph.

UNKNOWN: My only point is that using that as an example is very speculative. [unclear] the fact of the matter is that Hanssen had taken a number of measures to ensure that he did not have to be administered a polygraph [unclear] because he was concerned with not being able to pass it.

RICHARDSON: Okay. Well, I'll come back and talk about deterrence, but for the moment I want to talk more about validity issues. Okay, with regard to research studies and so forth that's been covered, one of the questions -- and I think it was handled in the public session two sessions ago, was -- "Can specific issue polygraph studies -- peer reviewed or not -- be used as a basis for evaluating the accuracy of polygraph screening?"

I think it was agreed that perhaps for a variety of reasons, because of the things that I've mentioned -- base rate considerations, differences in the relationship between relevant and control as they are being [unclear]. As I recall, following that John and Patricia presented their recent -- the results of their study of what polygraph screening studies have been done, and as I recall perhaps over six or seven studies that have been done, perhaps six out of the seven being done by two researchers. I probably don't need to go further into those studies, because those two researchers, Sheila Reed and Charles Honts, were here last time discussing their own work, and to a large extent they discredit -- tended to discredit -- their own work and are both very much opposed to polygraph screening.

I think it was suggested perhaps that there's an ongoing study -- there was some time ago -- at DoDPI. I guess it's appropriate for me to bring this up at this time. And the question is, "With past studies, as well as future studies, who is it appropriate to be doing these kind of studies -- validity studies?" [unclear] and I'm happy to work here with others to comment about it -- DoDPI exists for one basic reason: it exists to serve the federal polygraph community, basically, its training needs, its research needs, and to a lesser extent, its operational needs. More fundamentally, DoDPI exists for one and only [one] reason, that is, because polygraph exists. Polygraph exists, to a large extent, to the extent that it is found to be valid. Is it proper for a group with such a conflict of interest to be entrusted with this mission? I think not. As I suggested before in other settings, that sort of conflict of interest is comparable -- tantamount -- to having a research institute connected with the tobacco industry looking at nicotine addiction or the relationship -- causative relationship -- presence or absence -- between cigarette smoking and lung cancer. I think it's altogether inappropriate. I don't think it can be defended in any sense. And this has absolutely nothing to do with my feeling about the people at DoDPI now or the people that I knew ten years ago. Absolutely nothing. It's just the conflict of interest that shouldn't exist.

What DoDPI can do in terms of serving their clients is, they can do various utilitarian studies, and these things have been done [unclear] over the years, that is, the kind of study where, is the polygraph exam better if you ask Question 3 before Question 4, or that sort of thing -- [unclear] studies. Well, that sort of thing can be done, and that's basically a utilitarian thing. But in terms of basic validity studies there's a major conflict, and I suggest to you, as you consider the past, and as you make recommendations for the future, that you consider other alternatives to that sort of thing. In fact, I would suggest that you consider having people like yourselves do it, having gone through DoDPI. The most valuable thing that you all could have done in the last 18 months, I think, is to go through their 12-week school. I tend to think that you would come to some of the conclusions that I have. Maybe not. But nevertheless, I think you would be in a better position to do some of the things that you need to be doing now.

Okay, let's talk a little bit about countermeasures, and counter-countermeasures, and so forth. What are we talking about? Well, basically a fairly simple regimen, but I guess before I talk about even the basics of how one does it, and I'm going to make an offer to you all, to do it for you at some point, to demonstrate it, and to allow you to appreciate the demonstration by actually doing it yourselves in a mock crime scenario. But I'll say more about that in a minute.

Well, what is a polygraph countermeasure? Basically, if you were to listen to operational people in the polygraph world for the last umpitty-ump years, countermeasures are those things that are used by guilty people in order to be found non-deceptive with the results on a polygraph exam. In fact, I think that's absolutely wrong. That is clearly one type of polygraph countermeasure, but what a polygraph countermeasure is, is an intentional attempt to manipulate a polygraph chart in order to produce a non-deceptive result. Can you see that? If you believe anything that I've said so far, you will believe that the error involved with polygraph affects both innocent people and guilty people, those who both have a need in order to -- for different reasons -- in order to have non-deceptive results.

So, I and others justify our positions, our involvement in this, in that clearly -- although I'm not interested in helping a guilty person become non-deceptive in anything -- I'm quite unwilling to allow an innocent person to be a false positive under any circumstances. So what I would really like is through my efforts, and the efforts of other people -- my efforts haven't really begun yet -- but through my efforts, is that the polygraph people, if they haven't realized that this is invalid, that they're going to realize that it's unworkable, once the whole world understands how this thing works. And I plan, in a variety of different forums, to tell people that.

Okay, well, simple-minded sort of thing here. How does this work? Well, one could do -- there are essentially two tasks that one could [unclear] a polygraph; these are [unclear] by Dr. Honts and Reed last time, so I'm not going to go through this. We all, of course, agree that countermeasures can work, and I think all three of the preceding expert speakers agreed that there was no chance whatsoever that polygraph people would likely detect polygraph countermeasures. Charles Honts maintains that in order to do it [unclear] reading various materials, and I'll tell you about some of the materials available to you. Charles Honts indicates that you need to do -- to spend perhaps 30 minutes doing the sorts of things that he has trained people in his laboratories in the mid-1980s [unclear] master's program at Virginia Tech. I wouldn't argue with Charles, but what I do think that has been taken out of context is how much effort 30 minutes on the part of a would-be employer of countermeasures is. Even the people in polygraph that devoted 10 to 15 weeks of their life going to polygraph school -- Charles Honts and others -- [unclear] in his graduate school experience and graduate work and so forth, have devoted [unclear] the past 15 years. A half-hour on the part of anybody is certainly not a great use of time. In other words, I think [unclear] anybody.

Okay, how does one do it? Again, back to our basic model of a control-relevant question, a probable-lie Control Question Test. A relevant question, "Did you rob the bank?" A control question, "Prior to the age of 18, did you steal something from somebody who trusted you?" It's often misrepresented in the literature that perhaps the way to do this is to attenuate responses to relevant questions. It can't be done. Although it can be done by certain people in a limited fashion, it can't be done within the time frame of a polygraph exam. So what you have to do is, you have to augment the responses to the control questions. Now, it's a little bit more complicated than that, and we'll talk about the complications.

Well, what are the source material for how you do this? None of the source material is mine, but it's out and available, and I think it's been discussed. Charles mentioned some of these things the last time. A former polygraph examiner in the state of Oklahoma, Doug Williams, has published a manual something to the effect, "How to Sting" -- or "Sting the Polygraph" -- something to that effect that describes polygraph countermeasures. Gino Scalabrini, who's sitting in the back, and George Maschke are the webmasters of AntiPolygraph.org as well as the authors of The Lie Behind the Lie Detector, a polygraph publication, and in that publication it described how to do it. [end of tape; portion of recording missing]

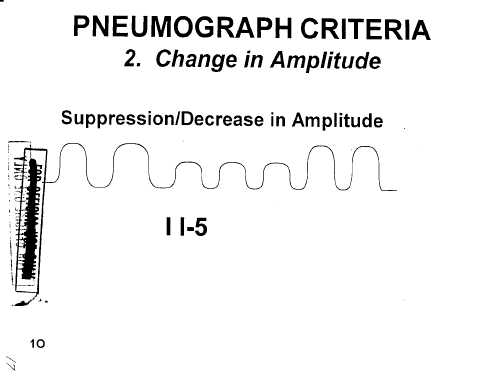

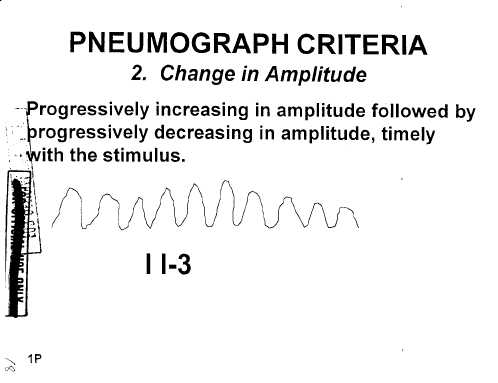

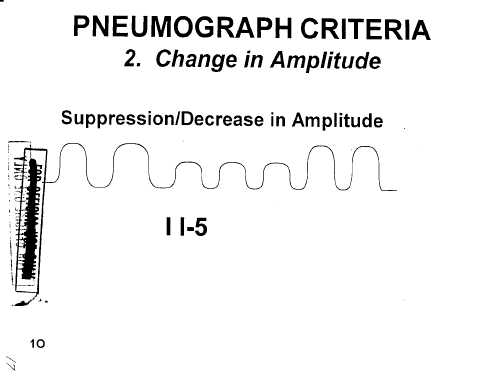

...and if you look at what they say, it's a real response, and look at it. Well, the information that I've provided you, and what is listed as Test Data Analysis, pull that out. This is information that comes from DoDPI. It contains a variety of information. I just pulled out, randomly, just a few pages out of the 61 pages available to you on the site, and it describes some theory and a very general cookbook procedure for scoring in terms of spot analysis of a spot on a test, looking at a control-relevant question [pair]. It talks about what is a meaningful response, or what is a meaningful chart recording using a pneumograph, and then, that which we see on that which is labeled page 16 is what they consider to be an example of a scorable response on the pneumograph channel. And what we have here, what DoDPI [unclear] what is contained within this document as a whole, well, it was published ten years ago, and has probably [unclear] ten years before that, presumably it's not now, is that across the three channels, that is, the respiration channels, the electrodermal, and cardio channel, there are roughly, as I recall, 22 scorable responses across those three channels. You happen to be looking at one here, for the pneumographic channel, which is a suppression/decrease in amplitude.

Okay, so with that in mind -- you happen to see another one here, a change in amplitude:

-- and then, some information about scoring, decision criteria and so forth. So, the key then, is to produce a response -- and to produce a response that they recognize as a response, that they teach as a response. Can you do that? Yes you can do that. I can get a polygraph; they can get a polygraph. They know you can do this as well as I can. I can get a polygraph -- I think they have nine responses for the pneumographic channel -- I've said and I will demonstrate for others at some time, that I can at will, just sit and produce at will, any one of those pneumographic responses. And there's some benefits to doing that.

Well, let's talk about the kinds of countermeasures one might use. Typically, one talks about various categories. One category is pharmacological, mental, physical. Another category would be direct and indirect. And another category I like to look at is good and bad. We'll talk a little bit about all three. In terms of how one might do that, what is considered pharmacological, although all these channels have an autonomic, involuntary component, and [are] therefore affected by drugs that affect the autonomic nervous system, you can do that differentially in the cardio channel, but the problem with it is that you tend to affect the control questions and the relevant questions, and by and large what you're going to end up with is at best inconclusive results, and it's probably kind of meaningless. The second reason that it's meaningless is you don't need to do it -- it's perfectly easy to do it using mental countermeasures or physical countermeasures.

Mental countermeasures are, at the appropriate time within a Control Question Test, to look at those things, to think a variety of thoughts. You can either ask yourself what the square root of thirteen [is] at the time, do mental calculation, that sort of thing, or pi times whatever. You can think various negative thoughts. You can think about your significant other cheating on you. You can think about being bitten by a cobra. You can think a variety of things, and you can readily produce responses and you can do it in a [unclear] time frame.

The other thing, with regard to physical responses, there are a number of things you can do. One, of course, in terms of all of this has to take into account polygraph counter-countermeasures. Counter-countermeasures are really simplistic. Physically, what you have is what's called a [sensor?] bar placed in front of the two legs on the polygraph chair -- you're really not measuring -- it's not a motion detector. What you're measuring is weight shift. So, the commonsense thing to this is that you're not going to do things out on the periphery. You're going to do things along the midline so that you're not shifting weight. So, tongue-biting and anal sphincter contraction, and a variety of things actually work very well.

One of the things that I indicated that you have the ability to do [is] direct and indirect sorts of things. Again, because all of the channels have an autonomic involuntary component, doesn't make it particularly interesting or easy to do direct based on that. But of course, one channel has a somatic, voluntary component, and it's the pneumo channel. And again, it's very easy to replicate all nine things that are contained in DoDPI's handout. And again, that's what I would do, and I would do it at the appropriate time.

If you look at what's page 16 in your handouts for a period of reference -- I don't know that this has been described to you -- well, what you see here in addition to the cartoon tracing, you see two vertical lines, a negative line, and a number 5:

What that represents, if it hasn't been told to you, is the vertical lines are the time period. What you have on the x-axis is time, and response magnitude/amplitude [are] on the vertical axis. What you have with this nomenclature at the bottom, the two vertical lines represent the beginning and the asking of the question, that is, "Did you rob the bank?" Blank, blank. The "minus" represents a negative response by the examinee, and the number 5 represents the 5th question answered on this particular sequence of the polygraph chart. And so what they're looking for typically are responses obviously that [unclear]. If the sequence is not known to the examinee in general, a typical format will have these questions presented so that the identity of the questions are known to the examinee, but the examinee typically does not know the order of the questions. So typically, what you're looking for is you don't want responses occurring before the question. Typically, a half-second to two or three seconds after the beginning of the asking of the question. And it varies depending upon various things you're looking at. The duration of various responses varies from very shallow cardiovascular to electrodermal. If you're actually looking through your binders, you can see obviously cardiovascular tends to be last over the inter-question intervals of what -- okay.
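[Illustration, not part of the remarks: the timing described above can be sketched as a simple check of whether a response onset falls where a chart reader would attribute it to a question. The window bounds come from the remarks ("a half-second to two or three seconds after the beginning of the asking of the question"); the function name, the choice of three seconds as the upper bound, and the sample times are assumptions made for the example, not DoDPI's procedure.]

```python
def response_in_window(question_onset_s, response_onset_s,
                       min_latency_s=0.5, max_latency_s=3.0):
    """Return True if the response begins within the assumed window after question onset."""
    latency = response_onset_s - question_onset_s
    return min_latency_s <= latency <= max_latency_s


# Hypothetical chart times in seconds; the question begins at 20.0 s.
question_onset = 20.0
for response_onset in (19.0, 21.5, 26.0):
    verdict = response_in_window(question_onset, response_onset)
    print(f"response at {response_onset:.1f} s attributed to question: {verdict}")
```

[A response that begins before the question, such as the first sample, falls outside the window, which matches the point that responses should not occur before the question is asked.]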

Polygraph Countermeasures Challenge

So, enough of that. I indicated something about willingness -- I'm happy to -- if the polygraph people here want to take exception with anything that I've said [unclear] I'm more than happy to address that. But what I would suggest to you all, if anybody wants to do this is -- I know we have 13, 14, 15 people here -- what I'd be willing to do is [unclear] in the absence of polygraph people at some point -- is to take two or three of you to show you countermeasures, to demonstrate them to you, to let you demonstrate to yourselves that you can do it, and then we will actually have you all -- all of you -- participate in a simulated crime [unclear]. I know you've all taken stim tests [unclear] what you saw was absolutely meaningless. It has absolutely nothing to do with a polygraph exam, and I'll tell you why. But what I think -- it would be very useful for you to see a simulated crime using the control question test because that's basically what you're being asked to evaluate in terms of polygraph screening. So what I would suggest that we do is I teach two or three of you to do polygraph countermeasures, to do a simulated crime, a standard one that -- perhaps one done at DoDPI. And then the professional polygraph examiners, whether it be DoDPI instructors, the federal agency polygraph examiners, [whether] it be well-known civilian examiners -- anybody -- we go down there, and we see -- we do a polygraph exam. Some of you are going to be programmed guilty. Some of you will be programmed innocent. The issue will be, can they determine where the countermeasures are? Which of you used countermeasures and where did you do it?

What I predict will happen is that they will fail absolutely miserably, and that they will falsely accuse some of you of using polygraph countermeasures. I can almost guarantee it. But the proof is in the pudding.

UNKNOWN: Rather than us check it, do you have an article, literature, that you would point us to -- that is a good, scientific study that demonstrates countermeasures.

RICHARDSON: No. I don't think I've seen anybody suggest what I've just suggested to you. And that is -- to tell you the truth, I have no idea why DoDPI released this [unclear]. This is the way I would go about doing it. This has been available for six or seven months. Yes?

UNKNOWN: If it's the case that, as you suggest, mental countermeasures can be used by thinking appropriate thoughts [unclear] at appropriate times during the test, during the control questions -- has anybody given any -- that you know of -- given any thought to having the [unclear] test, have their attention occupied by carrying out some attention demanding task while they're being tested? Signal detection, or counting backwards by threes, or any such thing that would make it more difficult to employ the kinds of mental countermeasures that you have been describing?

RICHARDSON: I think the possible compound of that is it probably might make mental countermeasures more difficult, but it might also, if one believes the theory that polygraph people have about what it is they're detecting, it might make it difficult to detect the initial --

UNKNOWN: I was just asking.

RICHARDSON: I don't think it's --

UNKNOWN: We have heard claims that countermeasures can be detected pretty reliably, although research showing whether that's true or not is hard to find. Do you know the basis of those claims, of the -- any research bearing on those claims?

RICHARDSON: I can tell you my experience at DoDPI ten years ago. What was done was that, at that time, [unclear] take the motion sensor bar and so forth, and the polygraph chair, and then tell somebody to do a given countermeasure while being polygraphed -- physiology being monitored and so forth. You can ask somebody, "Do you see this?" You do this, and you tap your toe, and you tap your finger, and you press your toe, and so forth. Under those circumstances, generally -- the peripheral things -- you can see them. But there's no attempt whatsoever to see if -- unannounced -- if you can see polygraph countermeasures that were not told in advance [unclear]. There's no indication --

UNKNOWN: Could I ask the question a little more generally? Is anyone in the room able to point to, um, poly--

UNKNOWN: I'll just give you a couple of [unclear] anecdotal things --

UNKNOWN: No. [unclear]

[crosstalk]

UNKNOWN: Other than --

UNKNOWN: [unclear] And I can tell you a story.

[crosstalk]

UNKNOWN: We sent an examiner to the DoD polygraph countermeasures course three weeks ago. It ended on a Friday. On a Monday he gave his first test after attending that course. His first examination, he called it countermeasures, the test. And in the post-test interview the individual admitted that he saw the vacancy announcement for the particular agency, he saw it had a polygraph requirement, and so he began studying the websites on how to beat the polygraph. He employed the countermeasures that he took off the website, and the examiner stopped the test, said, "You're doing countermeasures." And he said, "You're right. You got me."

RICHARDSON: [unclear] won't deny is [unclear] somebody who admits [unclear] is stupid in the first place--

UNKNOWN: The question is ability to do countermeasures. [unclear] This week the individual at hand, and he [had?] many tests with a sister intelligence agency for employment, and he admitted, was called by that sister intelligence agency, had he employed countermeasures? We ended up testing the person earlier this week, gave several tests. They looked very unusual, very [unclear], and each time we did the test, we told him he was doing something to try to thwart the outcome of that examination. And the short of the story is, after many tests, and many days, and many hours of working with this individual, he finally said, "Well, I just didn't think I could get through this on my own. I don't trust it because of what I've read on the Internet. You've got me. I'm doing X and Y." We let him [unclear] for a day, gave him a test, he stopped doing that stuff, and the outcome was fine.

DR. STEPHEN E. FIENBERG (NAS POLYGRAPH STUDY CHAIRMAN): Are there recorded rates of such detection? Not a scientific study, just [unclear] summaries, um--

UNKNOWN: Some do. I mean, well that's just like four in the thousands we've given. It's all happened here in the last few months--

FIENBERG: I'm -- well, let me do this differently. I'm -- this is not to be argumentative. I've been reading --

[crosstalk unclear]

FIENBERG: There are two studies done by Charles Honts and collaborators, one of which points out that people by [unclear] reading about it, but not trained, failed to implement it properly. But with 30 minutes' training, but trained by, you know, professionals, are capable of changing it. Now, are there comparable studies in the works? This is Kevin's question. Again, the governing issue of detecting it. Honts' studies only say that they lead to changed outcomes on what the detection is vis-a-vis the original test. And we're now talking about one layer deeper, about being able to evaluate what somebody is doing or not doing in that context. I have--

[crosstalk unclear]

FIENBERG: We have not seen any.

UNKNOWN: I think probably the only countermeasure studies being [unclear] anywhere [unclear] you're into a classified arena.

FIENBERG: Okay, so there is nothing in the published literature?

UNKNOWN: Correct.

[crosstalk unclear]

DAVID M. RENZELMAN (DEPARTMENT OF ENERGY POLYGRAPH PROGRAM CHIEF): I'd just like to make an observation. All of our examiners went through the countermeasures [unclear] and Dr. Barland and his staff, who is the recognized countermeasures person in this country [unclear] he trained our examiners, and I alluded to this when you came to [unclear] facility. And they taught our examiners how to practice countermeasures. And we tested each other. I don't have a person on my staff that's not an experienced examiner. We caught every one. Every one that was practicing [countermeasures]. A hundred percent. You then--

RICHARDSON: Are you willing to take my challenge?

RENZELMAN: I didn't interrupt you. And I told you this before. And there are studies, but they are classified, for obvious reasons. Because if they were unclassified, they'd be on AntiPolygraph.org, and that doesn't make sense. But I think you were offered the opportunity to go to the place where they are, and you were offered an opportunity to have that briefing, and I recommend that you get that.

FIENBERG: We have a scheduled classified briefing out--

RENZELMAN: And I would also like to say that the examiners that Dr. Honts used were not examiners. They were college students. There's a big difference.

RICHARDSON: I think actually, if you go back and read his work, he did his own work. He did--

RENZELMAN: I've worked with Dr. Honts for years. I know him. He'll tell you the story.

RICHARDSON: I think also with regard to Charles, Charles, he differentiates between two things, that is, the polygraph examiner's ability to detect countermeasures and -- versus -- the effective use of the countermeasure. Charles [unclear] I believe he indicated when he spoke here last time that he believes that anybody can apply countermeasures without any training whatsoever. He even [unclear] although he didn't -- somewhat discredited, I suppose -- that which was contained on AntiPolygraph.org. But he credited, at least to the extent he said that he didn't believe that proper use of those things would be detected by a polygraph examiner. What he believes, that the training is of value is, is to produce an effective result, that is, getting a non-deceptive result. And again, his opinion is that this can be done in thirty minutes' training. Then again, I wouldn't argue with that [unclear]--

[crosstalk unclear]

UNKNOWN: [unclear] to think about a reference point for this, because it is a -- an area that's very difficult to talk about. And it's -- I've already spoken to some [unclear] why someone would choose -- and it is a choice to use countermeasures -- and what [unclear] motivation might be. Kind of helpless with the personnel selection area, where social desirability [unclear] prevalent. What is the proper thing to do with someone who has been identified as using those -- conscious or unconscious -- um, motivations in an environment where the consequences -- getting hired or not hired -- [unclear]. I think there is some similarities there, and I think that --

[crosstalk]

What do you do with this type of employee or potential employee? Do you have an obligation, or a --

UNKNOWN: It's extremely [unclear] familiar with FBI pre-employment. Most people who apply to be an FBI agent [unclear] police and public [unclear], that sort of police-related [unclear] is a unique occupation, that this is something often people want to do their entire lives. It's a huge part of their self-identity. And so if I want -- I'm applying for an FBI agent -- I will do everything I can to get an advantage, the same way the [unclear] courses [unclear] SATs may or may not work, but [unclear]. Many people would believe that's -- it would -- that they should do things to make sure they pass the polygraph. Which may of course cause them to fail, that the same [unclear] may disqualify [them] from the FBI, because they're approaching this as something that's very important to them and their job. The same reason why they would use socially desirable answers on the personality questionnaire. And the question of whether you want to bounce people out of selection because they did that, it is a policy question which I don't know that the [unclear] rationale. But [unclear] official psychological perspective, you're taking a selection sample of high stakes -- every exam like that, people would tell things that they hope -- they think -- will help them pass the exam. And I believe a lot of people engage in countermeasures because they are worried that if they don't, then they may inadvertently fail the exam.

UNKNOWN: I think Mike Roberts in California has developed some evidence of [unclear] psych test [unclear] a large portion of police applicants --

UNKNOWN: Oh yeah. It's, it's--

UNKNOWN: In fact, now that is a negative factor [unclear] predictor. It is a predictor to the type of employee, not that they were lying about their drug question, or something like that. But it is part of the construct of this person, and then that becomes a problem to avoid later. So there may be some [unclear] evidence that -- even that almost becomes a scale predictor of some kind. And I don't think we have an answer as to what you do when you -- I think in some cases we do have a -- it's not dissimilar to a [unclear] school that has kind of a reputation. It has the luxury of saying, we're only going to take five people this year. And we get 5,000 applicants. You have a lot of luxury as to [unclear] people off for very minor things that they would be accepted in other schools and so forth. As your selection criteria goes up, as it becomes more critical then the policies do [unclear] they say, "Well, you know?" And a lot of policies -- correct me if I'm wrong -- at least in the personnel selection going towards -- [unclear] come back next year. So it's not in that sense. It's, you know, I'm not sure why this is happening. Because also a lot of [unclear] cross out just have a psych report, you know, with a high L or [unclear] or something like that you're not going to eliminate from the process any more than [unclear] eliminate from the hiring process for a suspected countermeasure.

It is, I think, fair to say [unclear] because people are being -- probably trying to beat these processes -- [unclear] to protect its own citizens [unclear] probably [unclear] become more sensitive, and there is a lot more quality control that goes into DoDPI's [unclear] so no suspected chart is passed on by one examiner and say, "I suspect countermeasures, they're not going to get hired or they're not [unclear]."

UNKNOWN: I wondered if you had any comment on [unclear] that the relevant/irrelevant technique is more robust against countermeasures than the control question technique.

RICHARDSON: That just refers to a different sort of thing. The theory again to [unclear] Control Question Test [unclear] produce responses to control questions. Basically, the way relevant/irrelevant -- I'm glad you asked this so I could say it on the record -- the way relevant/irrelevant tests are used, if they're used meaningfully at all [unclear] indication that they're not, they're just, whatever you think is in the chart. But agencies in recent years have done it by Rank Order Scoring of responses. So what you want to do is you want -- obviously, the only thing that are going to be scored in relevant/irrelevant tests are the relevant questions. Irrelevant again are not scored. So all you've got is one stimulus type. The key to that is producing responses, again, intentional responses, to different relevant questions. They're not, they're not as [unclear], just a different thought process [unclear].

Okay, I think I will have to comment about a personal sort of thing, and I would like to say for the record that I think that anybody who knows anything about the Control Question Test, as a matter of self-preservation that person has got to use countermeasures. A person who knows something about a Control Question Test is in a far worse condition than somebody who knows nothing at all. If you know the deception that's involved, in fact, if you know the question identity, a relevant and a control question, you have no ability -- at that point, you know, the game is up. You have got to produce a response to a Control Question Test. The only way that this thing possibly could work is if polygraph examiners are successful in deception, trying to make polygraph examinees believe the control questions are relevant. If you know it, you have no choice but to produce -- so, I have no qualm whatsoever.

UNKNOWN: Is that because it invokes a lying response? Or is that because -- earlier, you had said the theory of the control question technique is fear of detection, fear of consequences. Knowing about this, [unclear] you wouldn't necessarily [unclear].

RICHARDSON: I think that if you know that a control question is not relevant -- if you know it is a control and you know it is not related to the matter under hand, whether it be a bank robbery or an issue concerning an applicant [unclear] and so forth, if you know what the real questions are, then you are going to know what the consequences are. And you are going to be concerned with those consequences. If you know the difference between the control and the relevant questions, if you know the control are not the important questions, then you're going to be concerned. If fear of consequences is the issue, and you understand the question nature, then you're going to respond to the relevant questions. The only thing that you can possibly do to take this thing out of freefall error is to produce responses to the control questions.

Okay, moving on. We talked a bit about deterrence and utility. It's often been said to me that, even if everything I've said up to this point is true, that there might be some merit to doing this because [unclear] utility of polygraph screening. With regard to deterrence, we'll begin there. One of the cases that's probably been talked about more than any other in the last decade is that of Aldrich Ames [unclear] while committing espionage. It's been argued as to whether or not he passed or failed those exams. And assuming that he passed those exams, it's been argued as to whether this is some kind of anecdotal evidence as to whether -- or a lack of validity -- on the part of polygraph screening. I don't know. I have an opinion on that, but I haven't seen the chart. But what I do know is that it very clearly speaks to the lack of deterrence. He clearly was not deterred. If in fact Aldrich Ames was deterred, based on what he did, based on the polygraph exam, heaven help us in terms of the damage that he did cause.

The other thing that I'd point to in terms of deterrence, and particularly with the Bureau -- the Bureau began its polygraph screening program largely in 1994, looking at some applicants. Prior to that time we did not do it. Other agencies -- intelligence agencies -- NSA, CIA, DoD -- prior to that time DoD less than [unclear] but other agencies have done it. What I would suggest if one wants to look at the deterrent effect is, look at the twenty years prior to 1994 for the FBI and the Agency. Look at the number of penetrations they had versus the number of penetrations we had. If one looks at that, there isn't any deterrent effect whatsoever.

Also, it's often said the deterrent effect is demonstrated by talking to some of the major spies. It's something called Project Slammer, which has occurred with major spies, and it's said that some of these people had indicated that they had tried to avoid taking the polygraph exam, or they would, given the opportunity to do things -- to not take a polygraph exam. I would suggest that that sort of testing, it's really very self-serving. These are people that the polygraph community wouldn't believe for a second if the issue was damage assessment. They'd want to polygraph them. So why in the world would they believe them when it comes to something like that. That sort of thing is nothing but a self-serving statement [unclear] captors.

At any rate, I think there's not a great deal of evidence to show that there's much deterrent effect based on polygraph exams. [unclear] may be true with recent arrests dealing with espionage [unclear] fully disclosed and so forth.

UNKNOWN: [unclear] personally got involved in some of these operations at the Bureau. And I can tell you from firsthand experience as the case agent in an espionage case [unclear] as we were investigating the case -- as we were investigating the case, the particular individual did not [unclear] for sensitive positions because he would have to take a polygraph examination. [unclear] in terms of generalization.

RICHARDSON: All generalizations are negatives.

UNKNOWN: And again, I'd just point out, this case [unclear] when in fact [unclear] had this deterrent in terms of individuals [unclear]. Yes, they had access to sensitive information, but they could have [unclear] polygraph, they acknowledged it was a deterrent.

DAVID L. FAIGMAN (PANEL MEMBER): With this observation, [unclear] but if I were a spy, and I was concerned about exactly the concerns you're after, which is that there's random error in the process, I might avoid the polygraph not because I'm afraid of being caught as a spy, but I might avoid the polygraph because I'm afraid that they're going to -- there's random error -- and therefore I have a very nice position here [unclear] that there's any positives to avoiding it that's not [unclear] unless you really believe in countermeasures. And if I really believe in countermeasures, then I would seek the polygraph, because then I would -- so it sort of cuts both ways.

RICHARDSON: That's exactly what I would do. The other countermeasure we haven't talked about -- we've talked about physical, mental, pharmacological, and so forth [unclear]. The other countermeasure is a behavioral countermeasure, and that is, regardless of what the result is, you just say you didn't do it. And that may have been all that Aldrich Ames did. He just talked his way out of it. And I think you've got a very good chance of doing that [unclear].

I think that if I were a spy, as opposed to somebody else, if I had something I really wanted to hide, I probably would seek the polygraph. The reason being is, the minute I got through it I'd have five years of protection. I would do my thing with impunity, so probably --

FIENBERG: I'd like to [unclear] and come back, before I lose it and we go on to yet another topic. The discussions that the Committee has been having, we've been asking questions about predictive validity, in the following sense, and it goes back to the conversation that Kevin and Andy were having. So maybe there's something that could help us on this, either in that area, or directly in the polygraph.

We're talking about a series of exams that are asking about people in the past: events that have occurred, or things that people did, and we're asking whether the polygraph or some other instrument, such as a screening exam for police employees -- could be a paper-and-pencil test, or anything else -- can detect that information. You made a statement about use of -- the relative value of -- detecting deception there to predicting future behavior. Big question: are there studies anywhere on anything related to polygraph-like exams that go from predicting past events, past behavior, training on something that somebody has done, or has thought about, or whatever it is, to predicting future events or future behavior?

ANDY RYAN (CHIEF OF RESEARCH, DEPARTMENT OF DEFENSE POLYGRAPH INSTITUTE): I don't know of any specific study that looked at that. There have been several [unclear] psychological [unclear] and polygraph and polygraph outcome, and whether or not there's any correlation.

[crosstalk]

FIENBERG: So, I would begin by saying, if we had an Aldrich Ames or a Hanssen that may have been spies last year, it might be well that we would all agree that if we predicted next year they would also still be spies, and so, in that sense, past behavior is future behavior. But without detecting spies per se, we're detecting deception on an exam, other kinds of deceptive behavior that may or may not relate to the question at hand, like the use of countermeasures in the taking of an exam because I was worried they would ask me the kinds of questions that I'd respond oddly to, so I went to polygraph dot org -- AntiPolygraph.org -- and I read about use -- so that I wouldn't expose myself inappropriately, but I'm as honest as the day is long, and you detected me because I was fearful that my nervousness was going to betray me. Is that something you want to catch? And why? And what is that indicative of as a predictor of future behavior? So, I give that as just a starting [crosstalk]. The question is, are there any studies out there? What can we say--

RYAN: Complex question. [unclear] predictability of these measured traits, whatever they are, their relationship to past [or] current behavior. Certainly I don't care about the [unclear] certainly comes to a question of other things, but I think that one of the things not directly related to polygraph but, you know, almost to a [unclear] deterrence effect as opposed to, okay, we have a polygraph program here, so I'm not going to go to work here. I'll go apply for a job somewhere else. My personal experience in law enforcement is that would happen [unclear] agencies where there is no polygraph or is no psych [unclear]. I think that there is at least -- the personality research, there is literature to lead us to believe that there -- you don't wake up in the morning and decide to become a spy. You don't wake up and say, "Today I'm going to commit espionage." You might wake up today and say, "I'm going to kill myself. Crash a plane." But it is something that takes conscious thought over time. People develop into these kind -- these types of behaviors. And whether or not we have any real hardcore proof of early predictors that people would do that -- I don't see that -- but part of the personnel security process is the constant monitoring of these behaviors. It's like getting caught smoking as a teenager. You're going to eventually do it behind the barn one day. You always think your parents are watching. There is a deterrence there. So if there are behaviors -- security violations of the lesser, I guess, quality -- is that a reason to continue the polygraph program? So we discover that in a polygraph [unclear] an employee inadvertently or purposefully took material home that they shouldn't have. It violated a policy. It's not really a termination offense, but then, if that kind of behavior continues and grows, like thieves -- they start with small, petty crimes and end up with the felonies -- so these things can grow. So that probably is where the research would go.

And I don't know of anything that's [unclear] polygraph [unclear] because polygraph has always been designed to do one thing: direct future investigative resources on something that's discovered by past or current behavior. So its validity or utility never has been looked at.

I would like to, if I could, [unclear] I feel a need, Drew, to ask -- the questions that you now refer to -- several cases that are ongoing or have been detected, some, in the past -- are you referring to these things out of personal knowledge? And if so, why, if you're not referring to that out of personal knowledge, why not divorce the [unclear] science data [unclear]. For instance, you made [unclear] statement about [Project] Slammer. Do people in Slammer report, as they do, that they avoided polygraph? How do you know that?

RICHARDSON: [unclear] in public, but it's been said to me [unclear].

UNKNOWN: I'm as interested in [unclear] argument as anybody -- maybe more so. Argument, for sure [laughter]. I understand what's been said about the last two topics, countermeasures and deterrence, is that there really isn't any scientific evidence that -- what we've been talking about are rationales, reasons [unclear] might or might not be, and on and on and on. I was hoping that there, since it's referred to in the handout, that there may be some evidence that we can talk about with respect to P300s. I believe [unclear] talk about it or not?

RICHARDSON: Yes.

UNKNOWN: [unclear]

RICHARDSON: I planned on talking about a number of other things before I got to that, but --

UNKNOWN: Okay.

RICHARDSON: but I do finally want to talk about that word. And actually the principal inventor is here as well, Larry Farwell. Perhaps you might want to hear -- you might want to hear from him. At any rate, we will get to that.

I am -- as I have been a critic of Control Question Test polygraphy, that is, emotion-based polygraphy, I have long been a proponent of concealed information polygraphy, whether it be on [unclear]. But, moving on, we talked a little bit about deterrence. Let's talk about utility. What is utility? Utility is generally associated with the obtaining of admissions and confessions, somehow in connection with the polygraph [unclear]. Well, how do we look at that? These admissions and confessions, are they -- one, are they real? Are they meaningful? Or are they not? Let's assume for the moment that they're real and meaningful. One of the questions that I would ask -- and I don't think has been answered [unclear] -- is, whether or not -- there's nothing to indicate that anything that's real or meaningful would not be obtained in the absence of a polygraph exam. That is, a skilled interrogator may well have obtained it in the absence of a polygraph exam. There really are no studies to indicate that there's really any true benefit -- that there's anything [unclear].

Now in terms of whether or not these things are really useful, there have been -- we have a history of, over the last decades, various agencies that show annual reports claiming numbers of admissions and confessions for polygraph exams [unclear]. By and large, I think we have programs now -- continued programs in order to detect espionage, major crimes, major national security breaches--

[crosstalk]

UNKNOWN: Are there reports, or is there any data, showing the rate or proportion of confessions or admissions under polygraph examinations versus a rate or proportion of confessions or admissions under other interrogation techniques that do not involve the polygraph?

UNKNOWN: [unclear]

UNKNOWN: What would be the alternative? What alternative techniques [unclear]?

UNKNOWN: Background investigation.

UNKNOWN: Background investigation of the [unclear].

UNKNOWN: That's a good question.

UNKNOWN: [unclear] I can spend an hour, [unclear] hours here, interviewing with the polygraph, or I can have an interviewer talk to or [unclear] without a polygraph.

UNKNOWN: Several of the intelligence community agencies found that about 80 percent of the people, adjudicative information that they gave came from the polygraph process, not the background investigation.

UNKNOWN: That isn't -- the question was, are there any -- are there any comparisons like that [unclear] interrogation, discussion without persons -- with the polygraph?

RICHARDSON: I think in order to do that meaningfully you'd have to be matching [unclear] the data, essentially the same people doing it. Right now, background investigations in the Bureau many times are done by, you know, contract investigators that certainly don't correspond to the best criminal investigators/interrogators [unclear]. I doubt that's been done. I have not [unclear].

RYAN: [unclear] again where that happens is sometimes [unclear] not going to be a controlled study [unclear] where people in the application process provide certain [unclear] sometimes they decide during a [unclear] interview to reveal information that has not been revealed prior to that, knowing that that's [unclear] motivator. Why did they do that in the [long passage unclear] application causes it to happen.

UNKNOWN: I think that this really bears on something Drew said right at the beginning of this discussion, which is an interesting question, which is, do polygraph examiners hamstring themselves by forcing themselves into the fixed mode of interrogation caused by the procedures allowed under the polygraph setting again, thereby not use whatever expertise they know -- interrogation and/or detecting of lies otherwise by doing so? I think you said something like that at the outset, and I don't know if anybody comments on that.

UNKNOWN: The admission rates following polygraph tests are astronomically high, and that follows the best agents and the best detectives not being able to get those admissions through the course of the investigation. That's what you're [unclear].